Generally speaking, social networks aren’t known for deeply enriching the lives of users. As I wade through my Facebook feed, I find myself hip-deep in memes, bad lip reading videos, and ads for Warby Parker. Pinterest is a little better, although visiting the site is like going on a bad LSD trip hosted by “Real Simple”. Some of the newer networks, like Overtime, are trying to cater to specific user groups, but a lot of the same drivel still clogs the space.

Of course, Zuck and his minions aren’t exactly a charity.

But what happens when MIT, Harvard, and Microsoft come together to build a “social network”?

You get TalkLife.

The social network brands itself as a safe place to get and give help.

With the goal of better understanding, predicting, and preventing self-harm, TalkLife is aimed squarely at youth struggling with mental illness, depression, or anything else that might lead them to inflict harm on themselves.

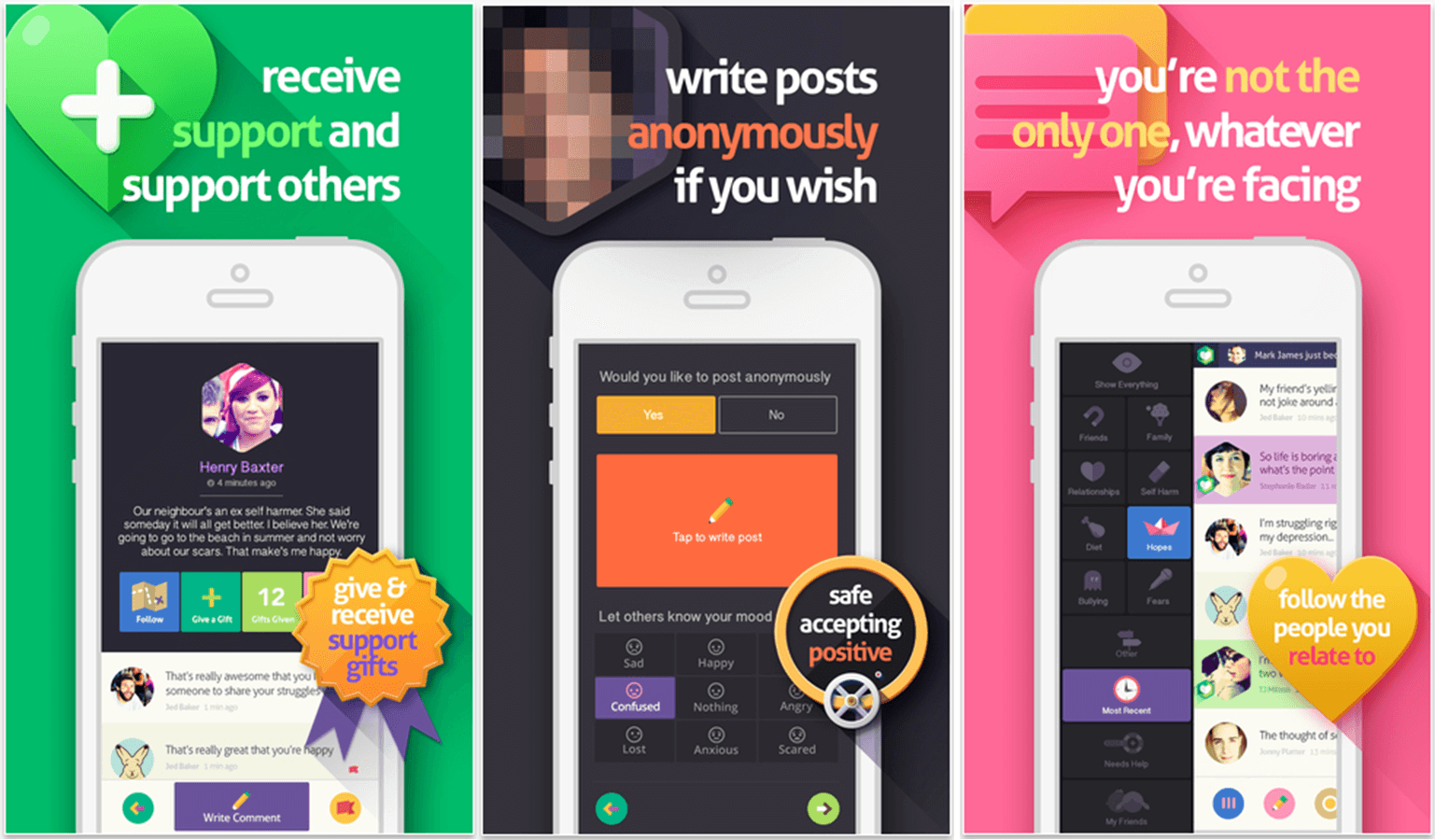

The app allows users to post, either personally or anonymously, about problems they are experiencing at school, home, work, or in any other area of their lives. Other users can then give support to the user through encouraging posts and “support gifts” (digital stickers of various kinds).

All forms of bullying are expressly prohibited, and volunteer “Anti-Bullying Moderators” work to remove offensive content from the feeds.

Perhaps the most interesting part of TalkLife is not the social network itself, but the research happening behind it. Using the data provided by users, the team behind TalkLife is actively researching how to better prevent self-harm among today’s youth.

Their website states:

> We imbue our models with the knowledge of current theoretical models in clinical psychology, choose model specifications that respect the sparsity, noise and complexity of the observed phenomena, and extensively critique our models with an aim of forging a better understanding of the risk-factors and behaviors underlying self-harm given anonymised behavioral data of thousands of people self-reporting engagement in self-harm. Our hope is to not only better understand their plight, but also eventually craft meaningful interventions that we hope will help these individuals.

In other words, MIT, Harvard, and Microsoft Research have banded together to stop young people from harming themselves wherever possible.

The company has raised $655k in funding, with $370k coming in the most recent round. It has about 175k users in more than 100 countries.

Despite all the good vibes at TalkLife, a field as complex as mental health may bring equally complex business challenges.

What obligation does TalkLife have to take action and report issues discussed by users? They say that their machine learning will enable intelligent intervention from trained professionals (though not employees of the company). The app also makes it possible for users to directly call emergency services, and they are working with Cacet Global to prevent child abuse. But how people perceive their responsibility may be what causes turbulence.

Unlike Facebook, which invites users to post about anything and everything, TalkLife specifically invites users to post about the worst parts of their lives. At some point, FirstTalk may get sued by the family of a user who has taken their own life after posting explicit warnings. If that happens, how will it affect the company? Legally, perhaps very little, but it may shake up their approach.

A second issue is that of censorship. TalkLife “Anti-Bullying Moderators” have the authority to take down any post they deem “offensive”. But as Reddit has discovered recently, determining what is offensive is no small task. Will TalkLife encounter the same outrage as Reddit when moderators remove offensive material? Given the nature of TalkLife versus the nature of Reddit, probably not, but there are bound to be users upset when posts are removed based on informal discretion.

TalkLife is a fantastic idea, and with more attention being given to health & wellness applications, it’s in a pretty good space. With MIT, Harvard, and Microsoft on board, it has solid reputational and financial backing. But it is navigating an incredibly sensitive world littered with landmines, and the success of the company may hinge on how well it handles that navigation.